Blocks must be persisted on disk so that the node can work with them after a restart. The DAG is persisted as well, but it stores only block metainfo, which excludes potentially large parts of a block (i.e. its body containing the deployed terms). However, the Casper protocol occasionally needs the deployed terms. For example, creating a new block involves choosing non-conflicting tips, which requires comparing their deployed terms for intersection. Hence, full blocks must be persisted in a place where Casper can look them up.

The purpose of this document is to specify an on-disk block storage which follows the existing BlockStore API and has the potential to store tens of millions of blocks.

The design goals of the block storage include:

The existing API for block storage is represented by the BlockStore trait.

    trait BlockStore[F[_]] {
      def put(blockHash: BlockHash, blockMessage: BlockMessage): F[Unit]

      def get(blockHash: BlockHash): F[Option[BlockMessage]]

      def find(p: BlockHash => Boolean): F[Seq[(BlockHash, BlockMessage)]]

      def put(f: => (BlockHash, BlockMessage)): F[Unit]

      def apply(blockHash: BlockHash)(implicit applicativeF: Applicative[F]): F[BlockMessage]

      def contains(blockHash: BlockHash)(implicit applicativeF: Applicative[F]): F[Boolean]

      // Should be deleted after designing the new block store implementation
      def asMap(): F[Map[BlockHash, BlockMessage]]

      def clear(): F[Unit]

      def close(): F[Unit]
    }

Its only implementation is LMDBBlockStore, which simply stores blockhash-blockbody pairs in LMDB.

The proposed implementation will reuse the existing trait as its API, but the asMap() method should be removed from it, as using such a method would be harmful once the number of blocks surpasses several thousand.

The proposed implementation of the existing API, LMDBIndexBlockStore, is a combination of an index backed by LMDB and a file containing an arbitrarily ordered sequence of block bodies.

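    import java.io.RandomAccessFile
    import java.nio.ByteBuffer

    import cats.effect.{Resource, Sync}
    import cats.effect.concurrent.MVar
    import com.google.protobuf.ByteString
    import org.lmdbjava.{Dbi, Env, Txn}

    final class LMDBIndexBlockStore[F[_]] private (
        lock: MVar[F, Unit],
        indexEnv: Env[ByteBuffer],
        indexBlockOffset: Dbi[ByteBuffer],
        blockBodiesFileResource: Resource[F, RandomAccessFile]
    )(implicit syncF: Sync[F])
        extends BlockStore[F] {

      // Runs f inside a read-only LMDB transaction (body omitted)
      private[this] def withReadTxn[R](f: Txn[ByteBuffer] => R): F[R]

      def get(blockHash: BlockHash): F[Option[BlockMessage]] =
        // bracket puts the lock back even if the lookup or the read fails
        syncF.bracket(lock.take) { _ =>
          blockBodiesFileResource.use { f =>
            withReadTxn { txn =>
              // Look up the offset of the block body in the LMDB index
              Option(indexBlockOffset.get(txn, blockHash.toDirectByteBuffer))
                .map(_.getLong)
                .map { offset =>
                  f.seek(offset)
                  val size  = f.readInt() // 4-byte header holding the body length
                  val bytes = new Array[Byte](size)
                  f.readFully(bytes)
                  BlockMessage.parseFrom(ByteString.copyFrom(bytes).newCodedInput())
                }
            }
          }
        }(_ => lock.put(()))

      // Bodies of the remaining operations are omitted
      def find(p: BlockHash => Boolean): F[Seq[(BlockHash, BlockMessage)]]

      def put(f: => (BlockHash, BlockMessage)): F[Unit]

      def clear(): F[Unit]

      def close(): F[Unit]
    }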

    object LMDBIndexBlockStore {
      final case class Config(
          indexPath: Path,
          indexMapSize: Long,
          blockBodiesPath: Path,
          indexMaxDbs: Int = 1,
          indexMaxReaders: Int = 126,
          indexNoTls: Boolean = true
      )

      // Concurrent already implies Sync and Monad, so a single context bound
      // is enough and avoids ambiguous implicit instances
      def apply[F[_]: Concurrent](config: Config): F[LMDBIndexBlockStore[F]]
    }

An instance of LMDBIndexBlockStore can be created by invoking LMDBIndexBlockStore.apply with an LMDBIndexBlockStore.Config containing the path and parameters of the LMDB index and the path to the block bodies file. It invokes the LMDBIndexBlockStore constructor with the following arguments:

- lock: An instance of cats.effect.concurrent.MVar which is used as a mutex for synchronization
- indexEnv: LMDB environment created by using the provided LMDB configuration
- indexBlockOffset: LMDB database interface created by using the provided LMDB configuration
- blockBodiesFileResource: A random access file (derived from the provided configuration) where the block bodies are stored, wrapped into cats.effect.Resource to capture the effectful allocation of the resource

An example implementation of the get method can be seen in the code block above. It acquires the lock and accesses the RandomAccessFile held by blockBodiesFileResource. After that, get looks up the offset of the corresponding block body and seeks to this position in the block bodies file. The first 4 bytes at this position are an integer size representing the length of the body. Reading the following size bytes and parsing them into a BlockMessage yields the requested block.

Other operations defined in the BlockStore API can be implemented in a similar fashion.
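As a rough illustration of how put could mirror get, the following sketch appends a length-prefixed body to the end of the file and records its offset. It is a simplification under stated assumptions, not the proposed implementation: SketchBlockStore is a hypothetical name, an in-memory map stands in for the LMDB index, raw byte arrays stand in for BlockHash and BlockMessage, and synchronized replaces the MVar lock.

```scala
import java.io.RandomAccessFile
import scala.collection.mutable

// Hypothetical stand-in for LMDBIndexBlockStore: the LMDB index is replaced
// by an in-memory map and the MVar lock by `synchronized`.
final class SketchBlockStore(file: RandomAccessFile) {
  private val index = mutable.Map.empty[Vector[Byte], Long]

  // Append "4-byte length + body" at the end of the file and index its offset.
  def put(hash: Array[Byte], body: Array[Byte]): Unit = synchronized {
    val offset = file.length()
    file.seek(offset)
    file.writeInt(body.length)
    file.write(body)
    index.update(hash.toVector, offset)
  }

  // Look up the offset, then read the length header followed by the body.
  def get(hash: Array[Byte]): Option[Array[Byte]] = synchronized {
    index.get(hash.toVector).map { offset =>
      file.seek(offset)
      val size  = file.readInt()
      val bytes = new Array[Byte](size)
      file.readFully(bytes)
      bytes
    }
  }

  // Membership only needs the index, the bodies file is not touched.
  def contains(hash: Array[Byte]): Boolean = synchronized {
    index.contains(hash.toVector)
  }
}
```

Because bodies are only ever appended, an existing offset never changes, which is what allows the index to store a plain 8-byte position per block.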

The LMDB index is the only data structure which contributes to memory usage, as LMDB uses memory-mapping. One key-value pair in the LMDB index consists of 32 bytes of block hash and 8 bytes of file offset. Hence, 40 bytes are needed to store one key-value pair, and 1 GiB of RAM is able to hold almost 27M pairs. However, this does not include the overhead LMDB incurs for storing its B+ tree layout, which according to several benchmarks is quite high and can reach ~70%. Taking this into account, 1 GiB can hold approximately 16M pairs.

The calculations for LMDB index memory allocation also hold for its disk space usage (including the overhead).
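The arithmetic behind these estimates can be reproduced with a few lines. This is only a back-of-the-envelope sketch: IndexSizing and pairsIn are hypothetical names, and the 70% overhead is the upper figure quoted above rather than a measured value.

```scala
object IndexSizing {
  val HashBytes: Long   = 32L
  val OffsetBytes: Long = 8L
  val PairBytes: Long   = HashBytes + OffsetBytes // 40 bytes per key-value pair
  val GiB: Long         = 1024L * 1024L * 1024L

  // Number of pairs that fit into `bytes`, given a fractional overhead
  // (e.g. 0.7 for the assumed ~70% B+ tree overhead).
  def pairsIn(bytes: Long, overhead: Double): Long =
    (bytes / (PairBytes * (1.0 + overhead))).toLong
}

// IndexSizing.pairsIn(IndexSizing.GiB, 0.0) gives roughly 26.8M pairs
// IndexSizing.pairsIn(IndexSizing.GiB, 0.7) gives roughly 15.8M pairs
```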

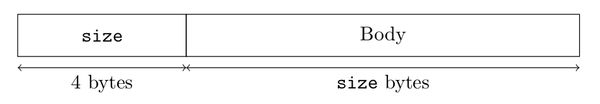

Block bodies vary in size, so their total disk space usage cannot be calculated in advance. The overhead of the proposed solution is only 4 bytes (the length of the body in bytes) per block body, which adds only 1 GiB of overhead for every ~270M blocks.

Storing blockhash-bodyoffset pairs is delegated to LMDB. A single pair's structure is shown in the following figure.
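The layout of a single pair can also be expressed in code. The sketch below is illustrative only: IndexEntry and its methods are hypothetical names, and it assumes a 32-byte hash key and an 8-byte offset value held in direct buffers, matching the toDirectByteBuffer and getLong calls in the get example.

```scala
import java.nio.ByteBuffer

object IndexEntry {
  val HashBytes   = 32
  val OffsetBytes = 8

  // Key: the 32-byte block hash, copied into a direct buffer.
  def encodeKey(hash: Array[Byte]): ByteBuffer = {
    require(hash.length == HashBytes, s"expected a $HashBytes-byte hash")
    val buf = ByteBuffer.allocateDirect(HashBytes)
    buf.put(hash)
    buf.flip() // make the written bytes readable
    buf
  }

  // Value: the 8-byte offset of the body in the block bodies file.
  def encodeOffset(offset: Long): ByteBuffer = {
    val buf = ByteBuffer.allocateDirect(OffsetBytes)
    buf.putLong(offset)
    buf.flip()
    buf
  }

  // Reads the offset without moving the position of the original buffer.
  def decodeOffset(value: ByteBuffer): Long = value.duplicate().getLong
}
```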

Block bodies are stored in the specified file and written in arbitrary order. A header containing the size of the body precedes the actual content of the body, as shown in the following figure.
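One consequence of this layout is that the file can be walked record by record without consulting the index. The following sketch shows such a sequential scan. BodiesFileScan is a hypothetical helper that is not part of the proposed API, and it assumes the header is the 4-byte big-endian integer that RandomAccessFile.writeInt produces and readInt consumes.

```scala
import java.io.RandomAccessFile

object BodiesFileScan {
  // Walks the file from the start and returns (offset, body) for each record.
  def scan(file: RandomAccessFile): Vector[(Long, Array[Byte])] = {
    val records = Vector.newBuilder[(Long, Array[Byte])]
    val end     = file.length()
    var offset  = 0L
    while (offset < end) {
      file.seek(offset)
      val size = file.readInt() // 4-byte header: length of the body
      val body = new Array[Byte](size)
      file.readFully(body)
      records += ((offset, body))
      offset += 4L + size // the next record starts right after this body
    }
    records.result()
  }
}
```

The offsets returned by such a scan are the values the index stores, so in principle a scan of this kind could be used to rebuild a lost index, provided each body can be mapped back to its block hash.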

Gets, finds and puts all require acquiring the lock and are hence mutually exclusive.
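The take/put discipline on the lock can be illustrated without cats-effect. In the sketch below a one-permit java.util.concurrent.Semaphore is swapped in for MVar[F, Unit]. Mutex and withLock are hypothetical names, and the code is meant only to show the mutual exclusion pattern, including releasing the lock when the protected operation fails.

```scala
import java.util.concurrent.Semaphore

// Hypothetical helper: a one-permit semaphore standing in for MVar[F, Unit].
final class Mutex {
  private val permit = new Semaphore(1)

  def withLock[A](body: => A): A = {
    permit.acquire() // corresponds to lock.take
    try body
    finally permit.release() // corresponds to lock.put(()), also on failure
  }
}
```

Every get, find and put would then run inside withLock, so at most one operation touches the index and the block bodies file at any time.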